AI coding models have gotten remarkably good in a short period of time. Good enough that writing code is no longer the constraint it used to be, and engineers can spend more of their time on the work that actually needs them: architecting systems, making hard design decisions, and solving problems that require deep context. That’s a big deal.

But it raises a question most teams haven’t answered yet: if writing code isn’t the bottleneck, what changes about how work moves through your team?

I built https://github.com/jthoms1/rpi-actions to explore that question. It’s a three-stage agent pipeline that takes a GitHub issue and turns it into a pull request. The whole thing runs on Claude Skills and GitHub Actions. It took me a few hours to put together, most of that spent iterating on the Actions runners. No orchestration framework. No custom infrastructure. Just Claude doing what Claude does well: reading code, reasoning about it, and writing changes.

Formalizing what already works

When I use Claude interactively, the best results come from a specific rhythm: work with the agent to research the problem, build a plan together, make sure the plan captures what I want, then let it go off and implement. Review the output later. That pattern, research then plan then implement, consistently produces better results than just pointing Claude at a problem and saying “go.”

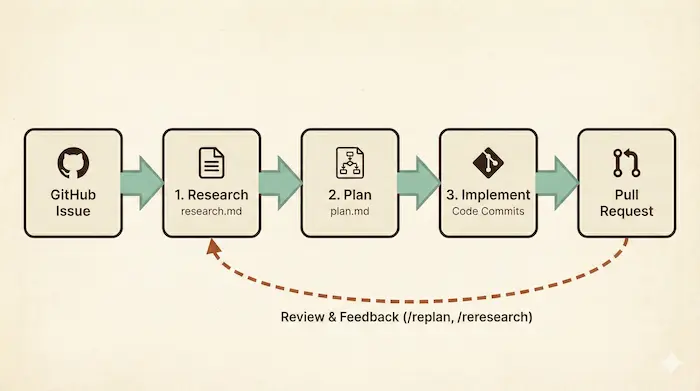

I first came across a formalized version of this through HumanLayer, and it clicked immediately because it matched what I was already doing. So I built a pipeline around it. Three sequential stages, each powered by a Claude Skill:

- Research. The agent investigates the codebase, reads relevant files, checks constraints, and writes up its findings in a `research.md` file. This isn’t just context gathering. It’s the agent building a mental model of the code before touching anything.
- Plan. Using the research output, the agent creates a phased implementation strategy and writes it to `plan.md`. The plan is specific: which files to change, what order to make changes in, what to watch out for.
- Implement. The agent executes the plan, making actual code changes across multiple commits. Each commit maps to a phase in the plan, so reviewers can follow the logic.

Every artifact gets committed to the branch. When the PR opens, reviewers can see not just the code changes but the reasoning behind them. Research and plan documents show up right in the diff.
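To make the flow concrete, here is a minimal Python sketch of the three stages as a sequential loop. It is not the code from the repo: the `Agent` callable, `fake_agent`, and `run_pipeline` are hypothetical names standing in for whatever actually invokes Claude. Only the stage order and the `research.md` and `plan.md` file names come from the description above.

```python
from pathlib import Path
from typing import Callable, List
import tempfile

# Hypothetical stand-in for a call to the coding agent: takes a skill name
# and the context gathered so far, and returns the text the agent produced.
Agent = Callable[[str, str], str]

# (skill name, artifact file the stage writes). Implement produces code
# changes rather than a document, so it has no artifact in this sketch.
STAGES = [
    ("research", "research.md"),
    ("plan", "plan.md"),
    ("implement", None),
]


def run_pipeline(issue_text: str, workdir: Path, agent: Agent) -> List[str]:
    """Run the stages in order, feeding each one the earlier artifacts."""
    context = issue_text
    completed = []
    for skill, artifact in STAGES:
        output = agent(skill, context)
        if artifact is not None:
            (workdir / artifact).write_text(output, encoding="utf-8")
            # Later stages see the issue plus every artifact written so far.
            context += f"\n\n# {artifact}\n{output}"
        completed.append(skill)
    return completed


def fake_agent(skill: str, context: str) -> str:
    # Placeholder so the sketch runs without any model access.
    return f"{skill} output based on {len(context)} characters of context"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(run_pipeline("Fix the login redirect bug", Path(tmp), fake_agent))
```

The point of the shape is that each stage only needs the files the previous stage left behind, which is also what makes the artifacts reviewable in the PR.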

Even when it’s wrong, it’s useful

Here’s the question that changes how you think about this: what if you just hand a ticket to an agent and let it run all three stages on its own? It might nail it on the first try. Or it might go off the rails.

But even when it goes off the rails, there’s usually value in what it produced. The research is probably solid. The plan might be 80% right. Maybe the implementation took a wrong turn in phase three. You don’t start over. You pick up from where the agent made an incorrect assumption and correct course from there.

This is the shift in thinking. The agent handles the first pass so you can focus on the parts that need your judgment. Accept the results when they’re good. When they’re not, you’re still starting from a better place.

And that shift in thinking is exactly what shapes the feedback loop. When a reviewer spots issues in the PR, they’re not starting from scratch. They’re steering. A PR comment starting with a slash is all it takes:

- `/replan` keeps the existing research and regenerates the plan and implementation with feedback
- `/reresearch` restarts the entire pipeline from scratch, incorporating reviewer notes

The agent reacts with an 👀 immediately so you know the command was received. Then it goes back to work. The reviewer is directing the agent, not just accepting or rejecting its output. The same way you’d redirect a junior engineer who took a wrong turn: keep what’s good, course-correct the rest.
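One way to think about the two commands is as a mapping from a comment to the first stage that has to be rerun. The sketch below assumes that anything after the command on the same comment is treated as reviewer feedback. `parse_command`, `COMMANDS`, and `ALL_STAGES` are illustrative names, not identifiers from the repo.

```python
from typing import List, Optional, Tuple

ALL_STAGES = ["research", "plan", "implement"]

# Command -> first stage to rerun. Everything from that stage onward is
# regenerated; artifacts from earlier stages are kept.
COMMANDS = {
    "/replan": "plan",
    "/reresearch": "research",
}


def parse_command(comment: str) -> Optional[Tuple[List[str], str]]:
    """Return (stages_to_rerun, feedback), or None if this is not a command."""
    text = comment.strip()
    if not text.startswith("/"):
        return None
    parts = text.split(maxsplit=1)
    start = COMMANDS.get(parts[0])
    if start is None:
        return None  # an unknown slash command is ignored
    feedback = parts[1].strip() if len(parts) > 1 else ""
    return ALL_STAGES[ALL_STAGES.index(start):], feedback


if __name__ == "__main__":
    print(parse_command("/replan keep the existing cache layer"))
    print(parse_command("/reresearch"))
    print(parse_command("Looks good to me"))
```

Ordinary review comments fall through as `None`, so only deliberate commands trigger another run.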

You don’t need much to make this work

You might expect a lot of machinery behind all this. There isn’t.

People still approach AI coding automation like it’s 2024. They reach for external orchestration frameworks, build homegrown tooling to manage agent state, and assume they need some unique hosting environment to run a coding agent. That made sense when the models needed a lot of hand-holding. It doesn’t anymore.

Claude already knows how to research a codebase. It knows how to make a plan. It knows how to implement changes. The missing piece isn’t more tooling. It’s giving Claude a structured way to do each of those things well, one at a time, with clear inputs and outputs.

That’s what Claude Skills provide here. Skills are reusable prompt modules that give Claude structured instructions for specific tasks. Think of them as specialized playbooks. Each skill in this pipeline has a clear scope, defined inputs, and expected outputs. Instead of building external orchestration to manage Claude, you let Claude manage itself through well-defined Skills. The GitHub Action workflow just calls Claude three times in sequence, passing context between stages. That’s it.
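The "clear scope, defined inputs, and expected outputs" idea can be modeled as a small contract per stage. This is only an illustration in Python, not how the skills themselves are written. `SkillSpec`, `missing_inputs`, the scope strings, and the `"issue"` and `"commits"` labels are all assumptions made for the sketch.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class SkillSpec:
    """Hypothetical contract for one stage: what it reads and what it writes."""
    name: str
    scope: str
    inputs: Tuple[str, ...]
    outputs: Tuple[str, ...]


SKILLS = [
    SkillSpec("research", "Investigate the codebase; change nothing",
              ("issue",), ("research.md",)),
    SkillSpec("plan", "Turn the findings into a phased strategy",
              ("issue", "research.md"), ("plan.md",)),
    SkillSpec("implement", "Execute the plan phase by phase",
              ("plan.md",), ("commits",)),
]


def missing_inputs(skills: Iterable[SkillSpec],
                   initial: Tuple[str, ...] = ("issue",)) -> List[Tuple[str, str]]:
    """List (skill, input) pairs that no earlier stage provides."""
    available = set(initial)
    problems = []
    for skill in skills:
        for needed in skill.inputs:
            if needed not in available:
                problems.append((skill.name, needed))
        # Whatever this stage writes becomes available to the stages after it.
        available.update(skill.outputs)
    return problems


if __name__ == "__main__":
    print(missing_inputs(SKILLS))      # full chain
    print(missing_inputs(SKILLS[1:]))  # chain with the research stage removed
```

Writing the contract down like this makes it obvious when a stage is asked to run without the context it depends on, which is the main thing the sequencing has to get right.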

What I learned

The models are improving fast enough that the gap between “what’s possible” and “what’s easy” is shrinking every few months. When I started building this, I expected to hit walls that would require custom tooling to get around. I didn’t. The approach mattered. The tooling barely did.

That’s the real takeaway. Engineers are at their best when they’re solving problems that require real judgment. Routine implementation has always eaten into that time. Now there’s something that can take a credible first pass at most tasks. The question isn’t whether AI can write the code. It’s whether we’re willing to restructure how work flows through our teams so engineers can focus on the hard problems.

The skills and workflow I built reflect how I work and what made sense for my use case. Your team will have different constraints, different review expectations, and different risk tolerances. Fork it, change the stages, swap out the skills, add guardrails where you need them. That’s the point.

If you want to fork it, the repo is open source. Setup takes about ten minutes if you already have a GitHub repo and a Claude Code account.